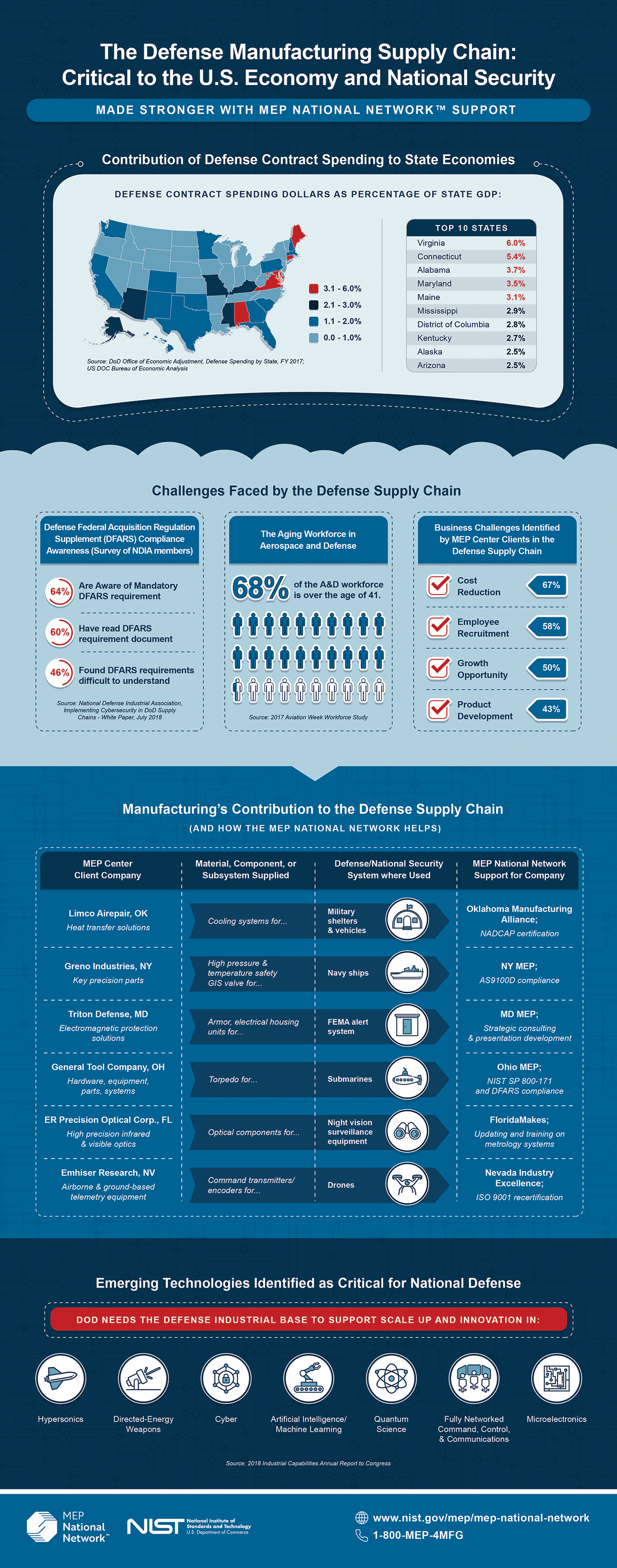

Recent state-of-the-art semantic segmentation models directly learn the interaction between each pair of pixels in an image, so their calculations grow quadratically as image resolution increases. Because of this, while these models are accurate, they are too slow to process high-resolution images in real time on an edge device like a sensor or mobile phone.

The MIT researchers designed a new building block for semantic segmentation models that achieves the same abilities as these state-of-the-art models, but with only linear computational complexity and hardware-efficient operations. The result is a new model series for high-resolution computer vision that performs up to nine times faster than prior models when deployed on a mobile device. Importantly, this new model series exhibited the same or better accuracy than these alternatives.

Not only could this technique be used to help autonomous vehicles make decisions in real time, it could also improve the efficiency of other high-resolution computer vision tasks, such as medical image segmentation.

“While researchers have been using traditional vision transformers for quite a long time, and they give amazing results, we want people to also pay attention to the efficiency aspect of these models. Our work shows that it is possible to drastically reduce the computation so this real-time image segmentation can happen locally on a device,” says Song Han, an associate professor in the Department of Electrical Engineering and Computer Science (EECS), a member of the MIT-IBM Watson AI Lab, and senior author of the paper describing the new model.

He is joined on the paper by lead author Han Cai, an EECS graduate student; Junyan Li, an undergraduate at Zhejiang University; Muyan Hu, an undergraduate student at Tsinghua University; and Chuang Gan, a principal research staff member at the MIT-IBM Watson AI Lab. The research will be presented at the International Conference on Computer Vision.

Categorizing every pixel in a high-resolution image that may have millions of pixels is a difficult task for a machine-learning model. A powerful new type of model, known as a vision transformer, has recently been used effectively.

Transformers were originally developed for natural language processing. In that context, they encode each word in a sentence as a token and then generate an attention map, which captures each token’s relationships with all other tokens. This attention map helps the model understand context when it makes predictions.

Using the same concept, a vision transformer chops an image into patches of pixels and encodes each small patch into a token before generating an attention map. In generating this attention map, the model uses a similarity function that directly learns the interaction between each pair of pixels. In this way, the model develops what is known as a global receptive field, which means it can access all the relevant parts of the image.

Since a high-resolution image may contain millions of pixels, chunked into thousands of patches, the attention map quickly becomes enormous. Because of this, the amount of computation grows quadratically as the resolution of the image increases.

In their new model series, called EfficientViT, the MIT researchers used a simpler mechanism to build the attention map, replacing the nonlinear similarity function with a linear similarity function. As such, they can rearrange the order of operations to reduce total calculations without changing functionality or losing the global receptive field. With their model, the amount of computation needed for a prediction grows linearly as the image resolution grows.

“The linear attention only captures global context about the image, losing local information, which makes the accuracy worse,” Han says.

To compensate for that accuracy loss, the researchers included two extra components in their model, each of which adds only a small amount of computation. One of those elements helps the model capture local feature interactions, mitigating the linear function’s weakness in local information extraction. The second, a module that enables multiscale learning, helps the model recognize both large and small objects.

“The most critical part here is that we need to carefully balance the performance and the efficiency,” Cai says. They designed EfficientViT with a hardware-friendly architecture, so it could be easier to run on different types of devices, such as virtual reality headsets or the edge computers on autonomous vehicles. Their model could also be applied to other computer vision tasks, like image classification.
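To make the idea of rearranging the order of operations concrete, here is a minimal NumPy sketch. It is not the researchers' implementation, and several details are assumptions made only for illustration: ReLU stands in for the linear similarity function (the article does not name one), the patch size is set to 16 pixels, and every function name is hypothetical. The sketch compares a conventional softmax attention, which builds a full N x N attention map over N patch tokens, with a linear-similarity attention computed two ways: once through the N x N map, and once by multiplying the keys and values first so the map is never formed.

```python
import numpy as np


def relu(x):
    # Assumed feature map for the "linear similarity"; the article does not name one.
    return np.maximum(x, 0.0)


def num_patches(height, width, patch=16):
    # Number of patch tokens for an image; patch=16 is an illustrative assumption.
    return (height // patch) * (width // patch)


def softmax_attention(q, k, v):
    # Conventional attention: builds the full N x N attention map (quadratic in N).
    scores = q @ k.T / np.sqrt(q.shape[-1])           # shape (N, N)
    scores -= scores.max(axis=-1, keepdims=True)      # for numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v                                # shape (N, d)


def linear_attention_quadratic(q, k, v, eps=1e-6):
    # Linear similarity computed the naive way, still via an N x N map.
    fq, fk = relu(q), relu(k)
    attn = fq @ fk.T                                  # shape (N, N)
    return (attn @ v) / (attn.sum(axis=-1, keepdims=True) + eps)


def linear_attention_reordered(q, k, v, eps=1e-6):
    # Same math, reordered: (Q K^T) V == Q (K^T V), so only d x d
    # intermediates are needed and the cost grows linearly in N.
    fq, fk = relu(q), relu(k)
    kv = fk.T @ v                                     # shape (d, d)
    k_sum = fk.sum(axis=0)                            # shape (d,)
    return (fq @ kv) / ((fq @ k_sum)[:, None] + eps)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    n, d = num_patches(256, 256), 8                   # small image for the demo
    q, k, v = (rng.standard_normal((n, d)) for _ in range(3))
    same = np.allclose(
        linear_attention_quadratic(q, k, v),
        linear_attention_reordered(q, k, v),
    )
    print("tokens:", n, "| reordered result matches:", same)
```

The reordering works only because the similarity is a plain product of transformed queries and keys; the exponential inside softmax prevents the same regrouping, which is why the softmax version cannot avoid the N x N map.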
